From ALongBit to AShortBit: Enhancing Test Efficiency in DevOps

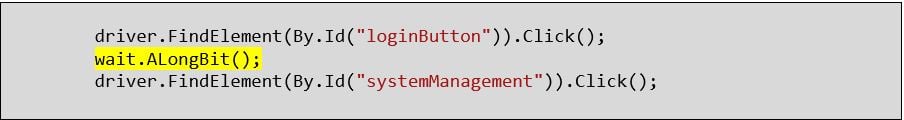

Even today, in the modern world of DevOps, I see this all too often in automated test code for regression tests:

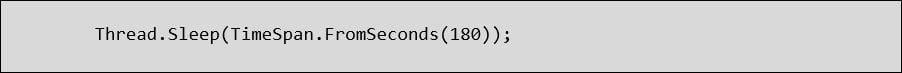

Maybe not disturbing on its own, but when you dig into what ALongBit actually is, you find this horror:

OMG, what is this abomination, and why would people do this? Thread.Sleep puts the automated test to sleep while it waits for some external processing to complete, usually asynchronous processing. But what does that really mean? In a modern browser application, the user clicks a button and this fires off an asynchronous request to the server. The user is free to carry on interacting with the page; at some later time the server sends a response, and the browser renders it so that the user is aware.

But hang on a second, hasn’t this always been the case? The answer is yes, but there is some subtlety here. In the past, when the user pressed a button, a request would be made and the user would wait while the server processed it and returned the whole page as a response. This was useful from a Selenium automated test perspective, as Selenium would typically wait for the page to load before trying to access the next control. So, what is the problem? Now that we have asynchronous processing and the server returns little “snippets” of HTML or JSON to be rendered in the browser, Selenium cannot wait for the page to load, because it already has.

So, back to our horrendous solution and why it is bad to just wait “a bit”. What I see is that whenever someone encounters this problem, they implement a static wait for a short, medium or long timeframe. Each time they do this, it adds a fixed time to the duration of the test. So, for a test with 20 static waits, each taking, say, 2 minutes, the test will take whatever it takes plus 40 minutes. In the modern world of continuous delivery, where we are focusing on DevOps principles and specifically pipelines to give us fast feedback, waiting 40+ minutes for a single test to complete is unacceptable. Sure, there are all kinds of sticking plasters you can apply, like parallel running, but none of them solve the underlying problem.

Don’t get me wrong, I am not saying that waiting is a bad thing. It is not, if it is done right, but waiting for a fixed time is. So, what is the solution? Well, it is simple really: don’t wait for a “long bit”, wait for a “short bit” instead. Let me expand. In these situations we should be dealing with “knowns”: we should know what we are expecting on screen, namely the presence or state of some object that we want to interact with. So, we set up a loop with a timeout, which is the maximum time that we are prepared to wait (let’s say 1 minute). Inside the loop we check for the presence or state of our object and then sleep for a very small amount of time (let’s say 20 milliseconds). We break out of the loop as soon as the object has the presence or state we expect. This way the test waits only as long as it actually needs to, up to the timeout, which gives us the best flexibility and the shortest possible execution time.
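The loop described above can be sketched in a few lines. This is a minimal illustration in Python rather than the test suite's own language, and `wait_until`, `element_is_ready` and the default values are hypothetical names chosen for the example (the 1 minute timeout and 20 millisecond sleep come from the text). The "element" is simulated with a timer so the sketch runs without a browser.

```python
import time


def wait_until(condition, timeout=60.0, poll_interval=0.02):
    """Poll `condition` until it returns a truthy value or `timeout` seconds pass.

    Returns the truthy value, or raises TimeoutError if the deadline is reached.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result  # break out as soon as the expected state is seen
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)  # the "short bit"


# Stand-in for an asynchronous page update: the element becomes
# "ready" about 0.1 seconds after the test starts waiting.
ready_at = time.monotonic() + 0.1


def element_is_ready():
    return time.monotonic() >= ready_at


started = time.monotonic()
wait_until(element_is_ready)
elapsed = time.monotonic() - started  # about 0.1 s, nowhere near the 60 s timeout
```

In a real Selenium test the condition would check the presence or state of a control instead of a timer. Selenium's own explicit waits (`WebDriverWait`) follow the same poll-until-timeout pattern, so a hand-rolled loop is often unnecessary there.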

This does not just apply to UI tests but also to other integration tests. Whether they target REST APIs or web services, the same rules should apply to waiting. As part of our attention to Quality Assurance, we want the fastest feedback loops possible, so keeping waits to a minimum is a must.
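The same idea carries over to an API test that has to wait for a background job. The sketch below is illustrative only: `poll_for`, `FakeJobApi` and the response shape are all made up for the example, and the fake stands in for a real HTTP call so that the code runs on its own.

```python
import time


def poll_for(fetch, is_done, timeout=60.0, poll_interval=0.02):
    """Call `fetch` repeatedly until `is_done(response)` is true, then return that response."""
    deadline = time.monotonic() + timeout
    while True:
        response = fetch()
        if is_done(response):
            return response
        if time.monotonic() >= deadline:
            raise TimeoutError(f"no completed response within {timeout} seconds")
        time.sleep(poll_interval)


class FakeJobApi:
    """Hypothetical stand-in for a status endpoint; reports 'processing' a few times first."""

    def __init__(self, polls_until_done):
        self.remaining = polls_until_done

    def get_status(self):
        if self.remaining > 0:
            self.remaining -= 1
            return {"status": "processing"}
        return {"status": "complete", "result": 42}


api = FakeJobApi(polls_until_done=3)
response = poll_for(api.get_status, lambda r: r["status"] == "complete")
```

Against a real service, a 20 millisecond interval may be more aggressive than the endpoint deserves, so the poll interval is worth tuning per API.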

When these nasty waits appear in code, it is common to see something else happen. Often they start as “AShortBit”, then at some point the test starts to fail again, so they turn into “ALongBit” and possibly even make it to “AReallyLongBit”. While we can apply our solution to reduce the overall time it takes a test to execute, it is worth lifting our heads for a bit so that we can be sure that nothing sinister is going on. We should question whether the solution under test is starting to exhibit performance issues, and we should not ignore that in favour of getting the automated tests to go green.
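One way to keep an eye on this, sketched here as an assumption rather than an established practice from any particular framework, is to have the wait report how long it actually took, so that a wait creeping upwards shows up in the test output instead of being hidden by a bigger timeout. `timed_wait` and the `warn_after` threshold are hypothetical names for the example.

```python
import time


def timed_wait(condition, timeout=60.0, poll_interval=0.02, warn_after=5.0):
    """Poll `condition` like a normal smart wait, and return the seconds spent waiting.

    `warn_after` is a hypothetical threshold: waits longer than this still pass,
    but print a warning so a slowing system gets noticed.
    """
    started = time.monotonic()
    while not condition():
        if time.monotonic() - started >= timeout:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)
    elapsed = time.monotonic() - started
    if elapsed > warn_after:
        print(f"WARNING: wait took {elapsed:.2f}s (threshold {warn_after}s)")
    return elapsed


# Simulated slow response: ready after about 0.1 s, with a deliberately low threshold
# so that the warning path is exercised.
ready_at = time.monotonic() + 0.1
elapsed = timed_wait(lambda: time.monotonic() >= ready_at, warn_after=0.05)
```

The test still goes green, but the recorded durations give us something to question when “a short bit” quietly stops being short.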

So, there are a number of things to take away here. Firstly, we should not sacrifice Quality Assurance for the sake of getting automated regression tests passing. Secondly, we should not be employing a robot to sit about idly doing nothing when we could get it working harder; a 50-minute test that spends 45 minutes waiting should start to ring alarm bells. I hope this was useful and look forward to seeing you in “ABit”!
